Design of Experiments

Learn Fast, Move Even Faster

Val Yonchev

What Is Design of Experiments?

Every new product, service, feature, or other change we introduce to make things better (growth, revenue, experience, etc.) starts as an idea, a hypothesis, or an assumption. In traditional approaches, one places bets based on some form of ROI or investment analysis, making further assumptions in the process.

Design of Experiments is an alternative to this approach, in which we try to validate as many of the important ideas, hypotheses, and assumptions as early as possible. Some of them we may want to keep “open” until we get real-world proof, which can be obtained through Split Testing, for example.

Design of Experiments is the practice we use to turn ideas, hypotheses, and assumptions into a concrete, well-defined set of experiments that can be carried out to validate them, i.e. to provide us with valuable learning.

Why Do Design of Experiments?

- Design of Experiments is a fail-safe way to advance a solution and learn fast

- Design of Experiments can provide a quick way to evolve a product

- Design of Experiments helps drive innovation in existing as well as new products

- Design of Experiments enables autonomous teams to deliver on leadership intent by placing small bets

- Design of Experiments is essential for realising the Build-Measure-Learn loop

How to Do Design of Experiments?

You may need more than one experiment for each item (idea, hypothesis, assumption). An experiment usually changes only a small part of the product or service in order to understand how this change could influence our goals (Target Outcomes). The number of experiments is determined by what you want to learn and how many distinct changes you will introduce.

Looking back after the experiment, you want to be able to identify what worked and what did not. The analysis of the experiments is essentially used to steer the direction of the product or service you are building. In other words, experiments are one of the means that allow you to pivot.

You need data for this analysis, and it can be both qualitative and quantitative. As in any analysis, the quality of the data is critical for the quality of the conclusions, and this quality is driven by the design of the experiment. When you design the experiment, you need to envision how you will measure outcomes and how you will collect the data, i.e. the measurement methods.

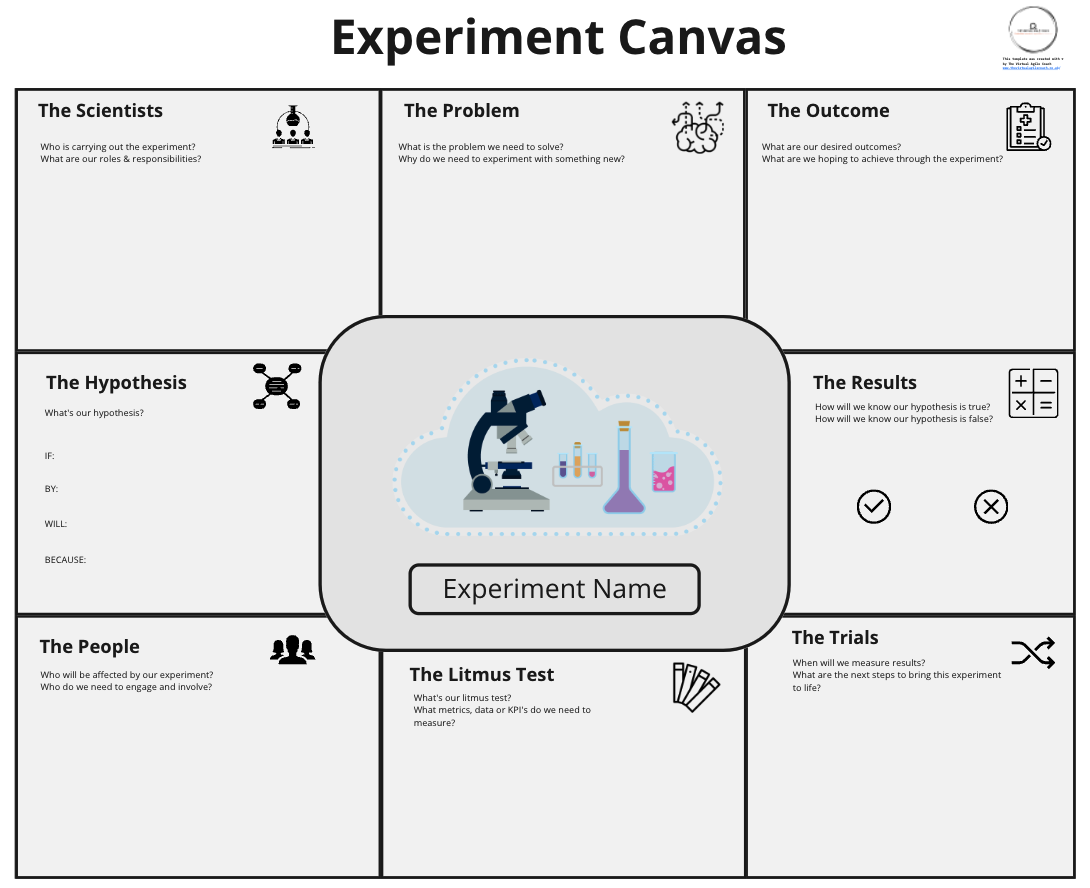

The format of the experiment documentation is not that important, and there are many canvas templates to choose from. What matters is the content and how well you have designed the experiment, i.e. whether it leaves few opportunities for an outcome that is too ambiguous to judge.

Good experiments need the following minimum details to be successful:

- Hypothesis: formulated as a sentence, often expressing an assumption

- Current Condition: What is the situation now (as measurable as possible)?

- Target Condition: What are we trying to achieve (as measurable as possible)?

- Obstacles: What could prevent us from achieving the target condition? What could cause interference or noise?

- Pass: How do we define a positive pass? The target condition may not always be achieved, so what do we consider a significant enough change to conclude that the experiment confirms the hypothesis, i.e. passes with a positive outcome?

- Measures: How shall we measure the progress?

- Learning: Always capture outcomes and learning, which should ideally lead to more experiments of higher order
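
As a rough illustration, the details listed above could be captured in a small record so that every experiment is documented the same way. This is only a sketch: the `Experiment` class, its field names, the `missing_details` helper, and the example values are all hypothetical and not part of any prescribed canvas.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Experiment:
    """One experiment card; the fields mirror the minimum details listed above."""

    hypothesis: str
    current_condition: str
    target_condition: str
    pass_criterion: str
    measures: List[str]
    obstacles: List[str] = field(default_factory=list)
    learning: List[str] = field(default_factory=list)

    def missing_details(self) -> List[str]:
        """Return the names of required details that are still empty."""
        required = {
            "hypothesis": self.hypothesis,
            "current_condition": self.current_condition,
            "target_condition": self.target_condition,
            "pass_criterion": self.pass_criterion,
            "measures": self.measures,
        }
        return [name for name, value in required.items() if not value]


# Illustrative values only; they do not come from a real experiment.
card = Experiment(
    hypothesis="A shorter sign-up form increases completed registrations",
    current_condition="10% of visitors complete the sign-up form",
    target_condition="15% of visitors complete the sign-up form",
    pass_criterion="Completion rate of at least 12% over two weeks",
    measures=["sign-up completion rate"],
    obstacles=["seasonal traffic changes"],
)
print(card.missing_details())
```

A card that still reports missing details is probably not ready to run.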

Once described, the experiments are implemented, tracked and measured so that the outcomes can be analysed. In an ideal world an experiment has a binary pass/fail criterion, but most often we need to analyse the data using statistical methods to find out whether there is a significant correlation between the change introduced by the experiment and the change in the target outcome.
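
When the pass criterion is a conversion-style rate, one common statistical check is a two-proportion z-test that compares a control group with the group exposed to the change. The sketch below is only one possible method, not something this practice prescribes; the function name, the 0.05 threshold, and the counts in the example are made-up assumptions.

```python
import math


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Pooled two-proportion z-test; returns (z, two-sided p-value)."""
    rate_a = successes_a / n_a
    rate_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_error == 0:
        raise ValueError("No variation in the data; the test is undefined")
    z = (rate_b - rate_a) / std_error
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Illustrative counts only: control (A) versus the experimental change (B).
control_conversions, control_visitors = 100, 1000
variant_conversions, variant_visitors = 130, 1000

z, p_value = two_proportion_z_test(
    control_conversions, control_visitors,
    variant_conversions, variant_visitors,
)
# The threshold is an assumption; agree on it when defining the Pass criterion.
significant = p_value < 0.05
print(f"z = {z:.2f}, p = {p_value:.4f}, significant = {significant}")
```

A check like this assumes independent observations and reasonably large samples; small or qualitative experiments call for other methods.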

NOTE: Successful experiments are not experiments that have proven our assumption correct. Successful experiments are those that provide valid and reliable data from which a statistically significant conclusion can be drawn.

Why & How to combine it with other practices?

Design of Experiments is nothing without execution, which is the main reason for combining this practice with others.

Experiments need to be prioritised, as we can only do so much in the time we have. Combining this practice with prioritisation matrices such as Effort-Impact or How-Now-Wow helps a lot.

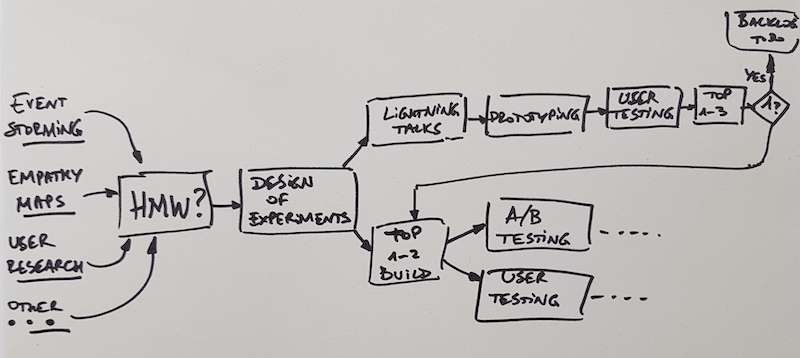

Experiments are often realised first through Rapid Prototyping or Prototyping and are subject to User Research & Testing. This combination provides fast learning before a single line of code is written.

Experiments may be run in production as well. In fact, tests in production are the ultimate form of validation of ideas, hypotheses, and assumptions, as they are supported by real data and real customer actions. The Split Testing practice is a particularly valuable combination here.

Often a set of experiments goes through a sequence of Rapid Prototyping or Prototyping with User Research, and then a subset of “successful” experiments is carried forward to production to pass through Split Testing.

Look at Design of Experiments

Links we love

Check out these great links which can help you dive a little deeper into running the Design of Experiments practice with your team, customers or stakeholders.